Scraping data from the web sounds simple in theory, but reality often proves more complex.

Sending too many requests from a single machine can trigger blocks, and scaling your data harvesting operations can be difficult due to website restrictions.

In these scenarios, web scraping proxies offer a solution. And in this article, we’ll explain how.

What are proxies for web scraping?

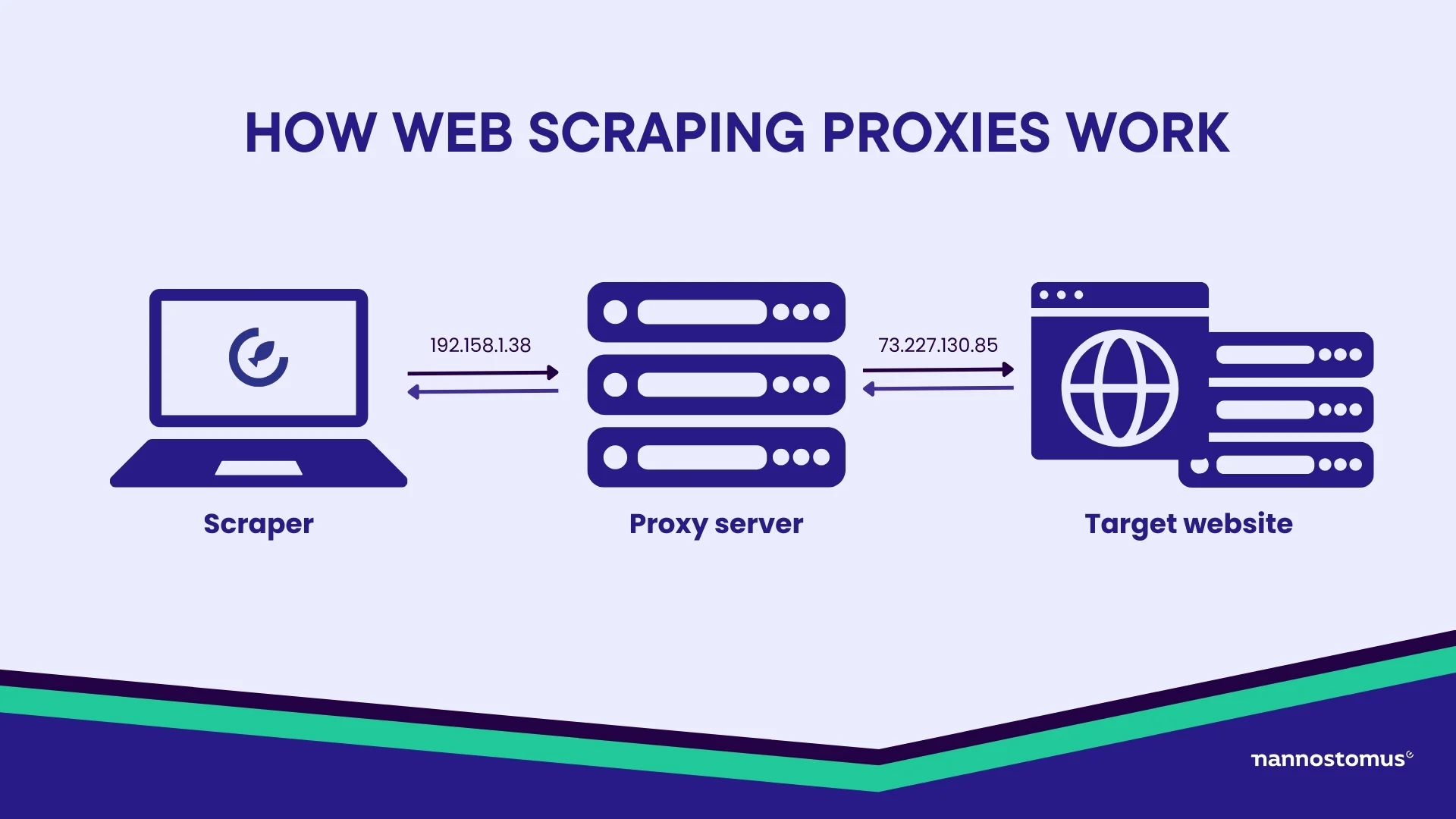

In its simplest form, a proxy server stands between you and the web. When you use proxies for web scraping, your requests to a website go through the proxy server first. The server then makes the request to the website on your behalf and brings back the information you need. In this process, your original IP address remains hidden, replaced by the proxy’s address.

When using proxies to scrape information, you mask your true identity and avoid being blocked by websites that monitor and restrict multiple requests from the same IP.

So, using proxies to scrape information helps you operate undetected, navigate web scraping restrictions, and successfully gather data that the web offers.

How do scraping proxies work?

As you scrape data from the web, your crawler sends many requests from one server with a single IP address. Many websites have protective measures in place that may block that IP address and stop you from collecting information.

When you use proxy services for scraping, they mediate between the end user (you) and the target website from which you’re extracting data.

So, instead of making a direct connection, the request is first sent to the proxy server. This server then changes your IP address and forwards the request to the intended website on your behalf. The website sees the request coming from the proxy server’s IP address, not yours.

Here’s a detailed breakdown of how the flow works, step by step:

- Configure your web scraper to use a specific proxy type. Input the proxy details (IP, port, and authentication). The proxy configuration can either be static (fixed IP) or dynamic (rotating IPs), depending on the proxy service you’re using. If your scraping task involves logging in or maintaining sessions, your scraper has to manage cookies and tokens correctly, especially when proxies rotate.

- Assign an IP address through the proxy. The proxy service picks an IP from its pool. If you’re using rotating proxies, the IP address switches after each request, after a set number of requests, or after a time interval, according to the rotation policy of the service.

- Send requests through proxies to the target website. To avoid detection, the scraper uses the right request headers (User-Agent, Referer), which mimic a real user’s browsing behavior. If your scraping requires keeping track of login sessions, cookies or session tokens are handled carefully so that they stay valid even when proxies rotate.

- Proxy routing and handling responses. The proxy forwards your request to the target website, and the website responds. That response goes back through the proxy, which then delivers it to your scraper. For scraping, this is a forward proxy acting on your behalf. If the target website runs a reverse proxy, it sits on the website’s side and may add its own steps for performance or load balancing.
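The configuration and request steps above can be sketched with Python’s standard library. This is a minimal illustration rather than a complete scraper: the proxy host, port, credentials, and target URL are placeholders we made up, and the helper names (`build_proxy_url`, `build_opener`, `build_request`) are our own.

```python
import urllib.request
from urllib.parse import quote

# Hypothetical proxy details; replace with the values from your provider.
PROXY_HOST = "proxy.example.com"
PROXY_PORT = 8080
PROXY_USER = "user"
PROXY_PASS = "secret"


def build_proxy_url(host, port, user=None, password=None):
    """Return an http:// proxy URL, with URL-encoded credentials if given."""
    auth = ""
    if user and password:
        auth = f"{quote(user, safe='')}:{quote(password, safe='')}@"
    return f"http://{auth}{host}:{port}"


def build_opener(proxy_url):
    """Create an opener that routes HTTP and HTTPS traffic through the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


def build_request(url):
    """Attach browser-like headers (User-Agent, Referer) to the request."""
    headers = {
        "User-Agent": "Mozilla/5.0 (X11; Linux x86_64)",
        "Referer": "https://www.example.com/",
    }
    return urllib.request.Request(url, headers=headers)


proxy_url = build_proxy_url(PROXY_HOST, PROXY_PORT, PROXY_USER, PROXY_PASS)
opener = build_opener(proxy_url)
request = build_request("https://www.example.com/products")

# Nothing is sent until you call the opener, for example:
# response = opener.open(request, timeout=10)
```

To rotate, you would build a new opener with a different proxy URL. Cookie handling for logged-in sessions is left out here to keep the sketch short.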

Types of proxies for scraping

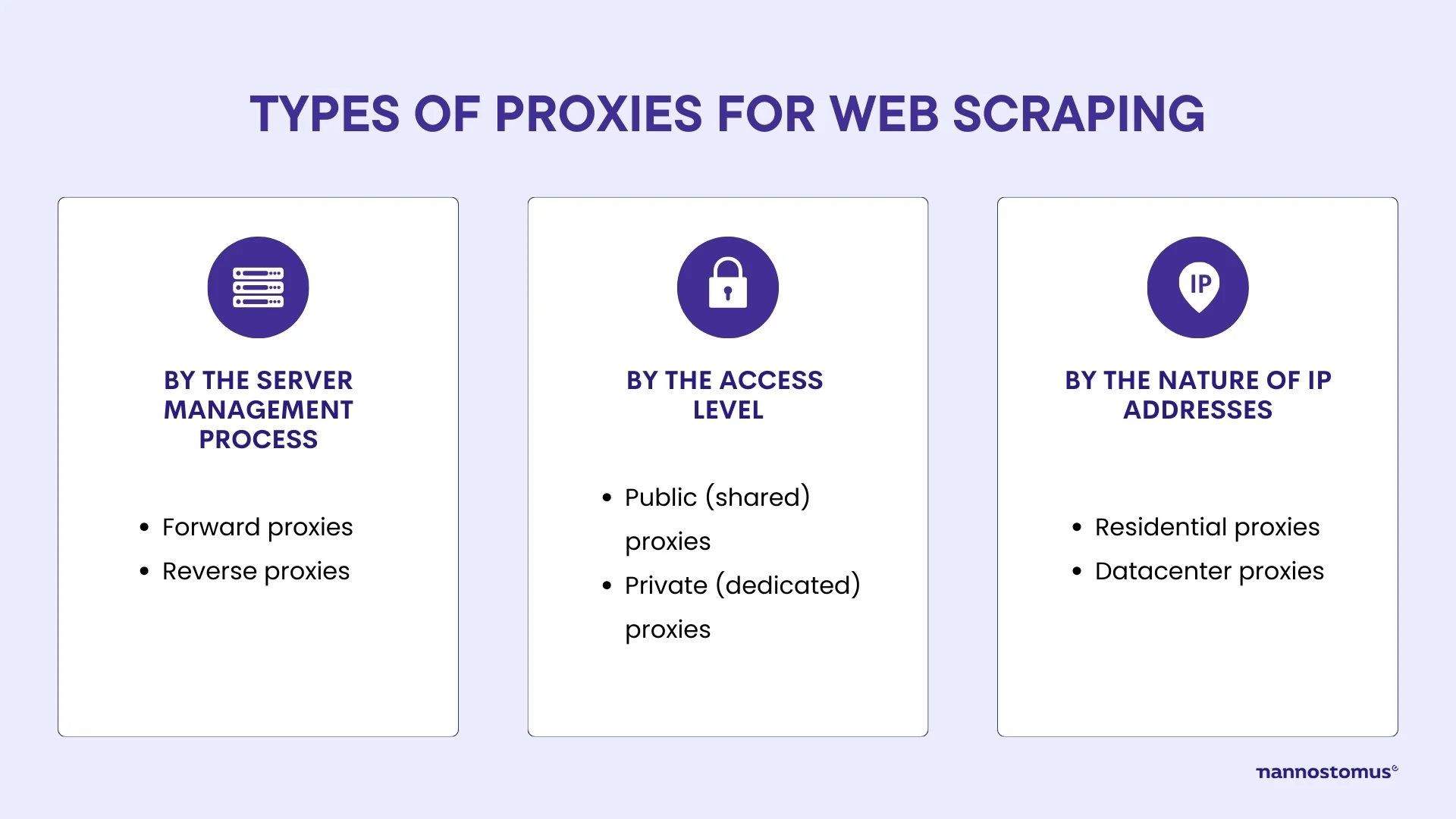

Different types of proxies are available on the market. The best proxy for scraping meets your requirements, budget, and the level of complexity of your tasks. We’ve grouped the major types of proxies by:

- Server management process

- Access level

- Nature of IP addresses

Proxies by the server management process

Proxies can either manage requests on the client side or handle them on the server side. Hence, there are two types of solutions on the market:

- Forward proxies

- Reverse proxies

Each offers benefits depending on your scraping objectives. So, let’s take a closer look at them.

Forward proxies

This type of proxy server sits between the client (your scraper) and the target website. When your scraper makes a request, it passes through the forward proxy before reaching the website, masking your original IP address with the proxy’s IP.

They’re entirely controlled by the client. You manage which proxy is used and how requests are routed. They also hide your IP address from the target website, as long as the proxy does not pass on identifying headers.

Advantages:

- Full control over the proxies

- Anonymity for your scraping activities

- Ability to handle large-scale web scraping projects

Disadvantages:

- May require careful management and setup

- Can be less effective if the proxy pool is small

Forward proxies are most commonly used for large-scale web scraping projects where anonymity and request management are crucial.

Reverse proxies

A reverse web scraping proxy is positioned between the target website and the internet at large. Instead of hiding the client, it protects and manages access to the website.

Unlike forward proxies, reverse proxies focus on controlling who can access a server, filtering requests before they reach the actual target. They are often used by website owners rather than scraping engineers to protect against excessive traffic or attacks.

Advantages:

- Provides security for websites by controlling incoming traffic

- Can load balance requests to reduce server strain

- Offers protection from scrapers and bots

Disadvantages:

- Not typically used by scrapers but rather by websites

- Does not provide anonymity for the client

If you’re building your own scraping service and need to control access to your backend infrastructure, reverse proxies will handle incoming requests and distribute them.

Proxies for crawling by level of access

Proxies can also be categorized based on the level of access they offer. This determines how the proxy is used and shared among users. In this section, we’ll explore two main types:

- Public (shared) proxies

- Private (dedicated) proxies

Public (shared) proxies

These are proxies multiple users can access at the same time. They are often free or come at a very low cost. Thus, they are an attractive option for small-scale scraping tasks or one-off data collection efforts.

Since they are shared among multiple users, you may experience slower performance and a higher chance of the proxy being blacklisted by websites. Because many users may be making requests from the same IP address, the risk of getting blocked is significantly higher.

Advantages:

- Free or low-cost option

- Easy to find and access

- Useful for small, low-priority scraping projects

Disadvantages:

- High chance of being blacklisted due to overuse

- Slow speeds due to multiple users sharing the same proxy

- Unreliable for large-scale or long-term scraping operations

Public proxies for web scraping are suitable for low-priority projects or when you only need limited amounts of data. They can be useful for scraping less-protected websites where speed and reliability are not major concerns.

Private (dedicated) proxies

These are exclusively used by a single user or organization. This means the proxy’s IP address is not shared with anyone else.

This exclusivity provides a much higher level of control, reducing the risk of IP bans and ensuring more stable connections during the scraping process. Private proxies also offer better security, as they are less prone to misuse or malicious activity.

Advantages:

- Faster and more reliable connections

- Lower risk of being blacklisted

- More secure, with reduced risk of malicious activity

- Ideal for large-scale or long-term scraping projects

Disadvantages:

- More expensive than public proxies

- Requires proper management to maintain efficiency

Crawler proxies by the nature of IP addresses

Proxies can also be classified based on the nature of their IP addresses. The two main types are:

- Residential proxies

- Datacenter proxies

Residential proxies

Residential proxies use IP addresses assigned to real devices—home computers or mobile phones—by internet service providers (ISPs). They appear as genuine users, so they are less likely to be detected and blocked by websites.

The key feature of residential proxies is high credibility and authenticity. However, they can be slower and more expensive due to their limited availability and the fact that they come from real users’ IPs.

Advantages:

- Highly trusted and less likely to be blocked

- Ideal for scraping sensitive or well-guarded websites

- Provides better anonymity as it mimics real user behavior

Disadvantages:

- More expensive than datacenter proxies

- Slower performance due to limited availability

- Requires careful management to ensure optimal performance

These web scraping proxy services are ideal for extracting data from websites with strict security measures—e-commerce platforms or social media sites—where anonymity and appearing like a regular user are essential. They provide a high level of trustworthiness since the IPs are tied to real devices.

Datacenter proxies

Datacenter proxies use IP addresses that belong to hosting and cloud providers running large data centers. These IPs are not assigned by an ISP to a home or mobile internet connection.

The standout characteristic of datacenter proxies is their speed and cost-effectiveness. However, because they originate from data centers and not real devices, they are easier to detect and block by websites, especially those with advanced anti-scraping measures.

Advantages:

- Faster and more cost-effective than residential proxies

- Ideal for large-scale, high-speed data collection

- Readily available in large quantities

Disadvantages:

- Easier for websites to detect and block

- Less secure and anonymous than residential proxies

- Not ideal for scraping sites with strict security measures

Thus, they are suitable for large-scale, high-speed web scraping operations where cost-efficiency and speed are prioritized over anonymity. They work best when scraping websites with lower security measures that do not aggressively block non-residential IPs.

Key factors for choosing the best proxy for web scraping

As data demands increase, so does the use of proxies in web scraping—about 7% of proxy traffic is tied to scraping activities, and that’s just rotating proxies. But not every proxy will give you the results you need.

Some might leave you blocked after a few attempts. Others are too slow to keep up with your data needs. Worse yet, some might not be reliable, so your scraping tasks become a real headache.

We’re here to make sure you don’t run into those problems. In this section, we’ll help you figure out what to look for in a proxy solution.

Anonymity

A high level of anonymity means the proxy masks your true IP and mimics genuine user behavior. That’s exactly what you need to avoid detection and ensure your scraping activities remain under the radar, even with advanced anti-bot systems in place.

- Residential proxy servers for web scraping, for example, excel in this area because they use IPs assigned to real devices by ISPs. Thus, they appear as legitimate users from specific locations. This is especially useful when scraping sites that are highly sensitive to bot-like behavior or have strict geo-blocking rules.

- Datacenter proxies, while faster and more cost-effective, tend to offer lower levels of anonymity. Their IP addresses originate from data centers. So, they’re easier to detect and block by sophisticated web defenses. These proxies work well for less secure websites, but for high-security targets, they can raise red flags and get your scraper banned quickly.

Another factor to watch for is whether the proxy leaks identifying information through headers. The ‘X-Forwarded-For’ header, for example, could expose your original IP. A good proxy should strip such identifiers and handle encryption properly so that all requests look like they’re coming from genuine users.
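One way to check for header leaks is to send a request through the proxy to an endpoint you control that echoes back the headers it received, then scan them. The sketch below covers only the scanning step. The function name `find_leaks` and the sample header dictionaries are hypothetical, and the list of header names is a common starting set, not an exhaustive one.

```python
# Headers that intermediaries commonly add. Seeing them at the target
# suggests the proxy is announcing itself or passing on the client IP.
LEAKY_HEADERS = ("x-forwarded-for", "forwarded", "via", "x-real-ip")


def find_leaks(received_headers, real_ip):
    """Return headers that reveal a proxy or contain the real client IP."""
    leaks = {}
    for name, value in received_headers.items():
        if name.lower() in LEAKY_HEADERS or real_ip in value:
            leaks[name] = value
    return leaks


# Hypothetical headers as seen by the echo endpoint.
leaky = {
    "User-Agent": "Mozilla/5.0",
    "X-Forwarded-For": "203.0.113.7",
    "Accept": "*/*",
}
clean = {"User-Agent": "Mozilla/5.0", "Accept": "*/*"}

print(find_leaks(leaky, "203.0.113.7"))
print(find_leaks(clean, "203.0.113.7"))
```

An empty result for a given proxy is a good sign, though it does not rule out other detection signals such as IP reputation.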

Rotating proxies also play a key role in maintaining anonymity by distributing requests across multiple IPs. This prevents any single IP from making too many requests in a short time, reducing the chance of detection. However, if the rotation is poorly managed—if you’re cycling IPs too frequently or too predictably—it can also trigger bot defenses.

Ultimately, if your web scraping involves sites with high security measures, choosing proxies that offer strong, undetectable anonymity—residential proxies or well-managed rotating proxies—is essential to ensure long-term success.

Speed and performance

The performance of a proxy largely depends on its bandwidth and latency. For web scraping, you want proxies that offer high bandwidth and low latency.

Datacenter proxies generally outperform residential proxies in this area, as they are designed for speed and can handle a higher volume of requests at a much faster rate. This makes datacenter proxies ideal for scraping tasks where performance is a priority and the target site has fewer anti-scraping defenses.

However, performance isn’t just about raw speed. You also need to consider concurrency—the ability to manage multiple requests at the same time. Proxies that support high concurrency enable you to scale your scraping tasks. This is particularly important for large-scale scraping projects, where hundreds or thousands of requests might need to be sent simultaneously.
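To make concurrency concrete, here is a minimal sketch that fans requests out over a thread pool and assigns proxies to URLs in round-robin order. The `fetch` function is a stand-in that only returns a string, so the example runs without network access. In a real scraper it would perform the HTTP call through the given proxy. All names and addresses are illustrative.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import cycle


def fetch(url, proxy):
    """Stand-in for a real HTTP call; reports which proxy it would use."""
    return f"{url} via {proxy}"


def fetch_all(urls, proxies, max_workers=8):
    """Run fetches concurrently, pairing URLs with proxies round-robin."""
    assignments = list(zip(urls, cycle(proxies)))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the input.
        return list(pool.map(lambda pair: fetch(*pair), assignments))


results = fetch_all(
    ["https://example.com/a", "https://example.com/b", "https://example.com/c"],
    ["proxy-1:8080", "proxy-2:8080"],
)
```

The `max_workers` value caps how many requests are in flight at once, so it should stay within what your proxy plan and the target site can tolerate.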

Another factor that impacts speed and performance is proxy location. Geographically close proxies tend to offer faster response times because the data doesn’t have to travel as far. For example, if you are scraping data from servers in Europe, using European proxies will result in faster response times compared to proxies located in North America or Asia. However, the trade-off here is that websites may detect and block multiple requests coming from the same region if you’re using a limited proxy pool.

It’s also important to assess the uptime and stability of your proxies. A high-speed proxy is only as good as its reliability. You need proxies with near-perfect uptime (99.9%) to ensure your scraping tasks aren’t interrupted. A good proxy provider should offer a robust infrastructure with load balancing mechanisms that prevent overload and maintain consistent speeds under pressure.
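It helps to translate an uptime percentage into actual downtime. The small calculation below assumes a 30-day month. The function name is our own.

```python
def downtime_minutes(uptime_percent, days=30):
    """Minutes of allowed downtime over the period for a given uptime %."""
    total_minutes = days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


print(round(downtime_minutes(99.9), 1))  # about 43.2 minutes per 30 days
print(round(downtime_minutes(99.0), 1))  # 432.0 minutes, or 7.2 hours
```

So even at 99.9% uptime, a long-running scraper should expect occasional proxy failures and retry accordingly.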

Geolocation options

The ability to control the geographic location of your proxy IPs allows you to bypass regional blocks, gather localized content, and gain insights tailored to particular markets. However, leveraging geolocation options requires a deep understanding of how different proxy types and locations affect scraping outcomes.

- Residential proxies typically offer the most robust geolocation options, as they are tied to real devices located in specific regions. If you’re scraping e-commerce sites, for example, pricing and product availability often vary between countries or between regions within a country. A residential proxy allows you to appear as if you’re accessing the website from the desired location, so you can gather the data shown to users there.

- Datacenter proxies may offer limited geolocation options, as their IPs belong to hosting providers rather than residential ISPs. These proxies are usually grouped in large clusters within data centers, so while you may be able to choose from different regions, the range of available locations is often narrower than with residential proxies. This can be problematic when targeting websites with strict geo-restrictions, as datacenter IPs are easier to detect and block, especially if many requests come from the same region or location.

When selecting a web scraping proxy server provider, you should look for services that offer global coverage. Here’s what to look for in terms of geolocation options:

- IP pool diversity

- City-level targeting

- Compliance with regional regulations

- Avoiding regional blacklists
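As a rough illustration of country-level and city-level targeting, the sketch below filters a proxy pool by location. The `Proxy` record and the sample pool are hypothetical. Real providers usually expose location targeting through their own API or through parameters in the proxy credentials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proxy:
    address: str
    country: str
    city: str


def select_by_location(pool, country, city=None):
    """Return proxies in the given country, optionally narrowed to a city."""
    return [
        p for p in pool
        if p.country == country and (city is None or p.city == city)
    ]


# Made-up pool using documentation-only IP ranges.
pool = [
    Proxy("198.51.100.10:8080", "DE", "Berlin"),
    Proxy("198.51.100.11:8080", "DE", "Munich"),
    Proxy("203.0.113.20:8080", "US", "Chicago"),
]

german = select_by_location(pool, "DE")
berlin = select_by_location(pool, "DE", city="Berlin")
```

If a filter returns very few proxies, that is a hint the pool lacks diversity for that region.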

Pool size

The proxy pool size refers to the total number of unique IP addresses a provider offers for use.

A large proxy pool is especially important for scraping operations that involve high-frequency requests. When you’re sending thousands—or even millions—of requests to a target site, reusing the same IP addresses too frequently can trigger anti-scraping mechanisms that block your access. A larger pool allows you to distribute these requests across many IPs, reducing the likelihood of rate-limiting, CAPTCHAs, or outright bans.

The size of the pool also directly affects anonymity and IP freshness. If you’re rotating proxies, you want to ensure the IPs are frequently changed to avoid detection. Proxies that cycle through a limited pool of IPs can quickly become ineffective, as websites may detect repeated use of the same addresses. A larger proxy pool gives you access to a wider range of IPs, making it harder for websites to correlate your scraping activity with a specific source.
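A quick back-of-the-envelope calculation shows how pool size relates to request volume. It assumes you have an estimate of how many requests per hour a single IP can make before the target site reacts. That threshold is site-specific, and the numbers below are purely illustrative.

```python
import math


def min_pool_size(requests_per_hour, per_ip_limit_per_hour):
    """Smallest pool that keeps every IP at or below the assumed limit."""
    if per_ip_limit_per_hour <= 0:
        raise ValueError("per_ip_limit_per_hour must be positive")
    return math.ceil(requests_per_hour / per_ip_limit_per_hour)


def load_per_ip(requests_per_hour, pool_size):
    """Average requests per hour each IP handles with even distribution."""
    return requests_per_hour / pool_size


# Illustrative numbers: 10,000 requests/hour, assumed limit of 60 per IP.
print(min_pool_size(10_000, 60))  # 167
print(load_per_ip(10_000, 500))   # 20.0
```

In practice you would want headroom above the minimum, since some IPs will be slow, blocked, or offline at any given time.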

IP rotation

The first factor to consider is how often your IP addresses should rotate. If your proxies switch too quickly (e.g., after every request), it may look suspicious, as human users typically maintain a single IP address for a longer period. On the other hand, rotating too slowly might result in detection. This timing will often depend on the target site’s security measures.

Effective IP rotation requires a degree of randomness. If IPs are rotated in a predictable sequence, anti-scraping algorithms may detect a pattern, flagging and eventually blocking your proxies. Randomizing both the choice of IP addresses and the timing of the rotations helps avoid this.
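Here is one possible way to randomize both the choice of IP and the rotation timing. The sketch keeps each proxy for a random number of requests within a range, then picks a different proxy at random. The class name and default values are our own assumptions, not recommendations for any particular site.

```python
import random


class RandomRotator:
    """Keep a proxy for a random number of requests, then switch at random."""

    def __init__(self, proxies, min_requests=3, max_requests=10, seed=None):
        if not proxies:
            raise ValueError("proxies must not be empty")
        self._proxies = list(proxies)
        self._rng = random.Random(seed)
        self._min = min_requests
        self._max = max_requests
        self._current = None
        self._remaining = 0

    def next_proxy(self):
        """Return the proxy to use for the next request."""
        if self._remaining == 0:
            # Avoid picking the same proxy twice in a row when possible.
            candidates = [p for p in self._proxies if p != self._current]
            self._current = self._rng.choice(candidates or self._proxies)
            self._remaining = self._rng.randint(self._min, self._max)
        self._remaining -= 1
        return self._current


rotator = RandomRotator(["proxy-1", "proxy-2", "proxy-3"])
sequence = [rotator.next_proxy() for _ in range(20)]
```

Adding a random delay between requests would be a natural next step, which we have left out to keep the example short.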

A key consideration in IP rotation is how you manage sessions. Some scraping tasks require maintaining a persistent session with the target website, for instance, when scraping data behind a login. In these cases, sticky sessions, where the same IP is kept for a specified duration or until the task is completed, ensure session continuity. If IPs are rotated too frequently during a session, you may be logged out or your session might break, which can disrupt your scraping efforts.
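A sticky session can be sketched as a small lookup table that pins each session to one proxy until a time-to-live expires. Many providers implement this on their side. The class below is our own simplified, client-side illustration, and the 10-minute default is an arbitrary assumption.

```python
import random
import time


class StickySessions:
    """Pin each session ID to one proxy until its time-to-live expires."""

    def __init__(self, proxies, ttl_seconds=600, clock=time.monotonic):
        if not proxies:
            raise ValueError("proxies must not be empty")
        self._proxies = list(proxies)
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so the behavior can be tested
        self._assigned = {}  # session_id -> (proxy, expires_at)

    def proxy_for(self, session_id):
        """Return the pinned proxy, assigning a new one if none or expired."""
        now = self._clock()
        entry = self._assigned.get(session_id)
        if entry is None or now >= entry[1]:
            entry = (random.choice(self._proxies), now + self._ttl)
            self._assigned[session_id] = entry
        return entry[0]


sessions = StickySessions(["proxy-1", "proxy-2", "proxy-3"])
first = sessions.proxy_for("account-42")
second = sessions.proxy_for("account-42")  # same proxy within the TTL
```

Requests that do not need a login can keep using a rotating pool, while logged-in flows go through `proxy_for`.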

Pricing models

Proxies come with various pricing structures. The right model depends largely on the scale of your scraping efforts, the level of anonymity required, and the overall performance you need.

| Type | Description | Best for | Pros | Cons |
|---|---|---|---|---|
| Pay-per-IP | You pay based on the number of unique IP addresses you use. Each IP is yours for a set period, usually monthly. | Small-scale projects where consistency is important. | Easy to manage and predict costs. | Can get expensive if you need a lot of proxies or frequent IP changes. |
| Pay-per-traffic | You’re charged based on the amount of data your proxies transfer (measured in GB). | High-volume scraping that requires rotating many IPs. | Unlimited IPs, scalable based on how much data you use. | Costs can vary depending on your data needs, so it’s important to monitor usage closely. |
| Pay-as-you-go | You only pay for what you use, whether that’s a set number of proxies or a certain amount of data. | Short-term or on-demand scraping tasks. | No long-term commitments, and you pay only for what you use. | Costs can be higher per IP or GB compared to subscription models. |
| Subscription | A fixed monthly or yearly fee for a specific set of proxies or data limits. | Continuous, large-scale scraping projects. | Predictable costs, often with added features like automatic IP rotation. | Less flexible: you’re locked into a plan even if your needs change. |
| Custom | These plans factor in data volume, geographic diversity, and features (API access). | Large enterprises with unique scraping requirements. | Fully customized. | Can be more expensive and require negotiation. |

Finding the right pricing model for the best web scraper proxy server depends on your project’s scale, how much data you need to scrape, and how frequently you’ll need to rotate IPs.
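To compare the pay-per-IP and pay-per-traffic models for your own workload, a simple calculation is often enough. The prices in this sketch are placeholders we invented for illustration, not real market rates, so plug in the figures from the providers you are evaluating.

```python
def monthly_cost_per_ip(ip_count, price_per_ip):
    """Pay-per-IP: cost scales with the number of IPs you rent."""
    return ip_count * price_per_ip


def monthly_cost_per_traffic(traffic_gb, price_per_gb):
    """Pay-per-traffic: cost scales with the data you transfer."""
    return traffic_gb * price_per_gb


def cheaper_model(ip_count, price_per_ip, traffic_gb, price_per_gb):
    """Return the cheaper model's name and both costs for comparison."""
    per_ip = monthly_cost_per_ip(ip_count, price_per_ip)
    per_traffic = monthly_cost_per_traffic(traffic_gb, price_per_gb)
    name = "pay-per-IP" if per_ip <= per_traffic else "pay-per-traffic"
    return name, per_ip, per_traffic


# Placeholder workload: 50 IPs at 2.0 each vs. 120 GB at 1.0 per GB.
print(cheaper_model(50, 2.0, 120, 1.0))
```

Cost is only one input. A cheaper plan that gets blocked more often may end up costing more in retries and lost data.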

Why is it essential to use web scraping proxies?

Proxy servers have many uses, but full-scale web scraping would be impossible without them. Here are the key reasons to use a proxy for scraping.

First is the anonymity they provide. As we said earlier, proxies mask your IP address so that your scraping activities are harder to detect. Rotating the IP address frequently also helps your scraping continue without interruption. And if scalability is a concern for you, proxies will enable you to make numerous concurrent requests to speed up the data extraction process.

Beyond providing anonymity and scalability, scraping proxies also balance the load of requests. Websites often have mechanisms in place to limit the number of requests an IP address can make within a specific timeframe. If this limit is exceeded, the IP address could be temporarily or permanently blocked. Scraping proxies distribute requests across multiple IP addresses. This way, they prevent detection and ensure uninterrupted data extraction.
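The load-balancing idea can be sketched as a pool that tracks recent requests per proxy and always hands out the least-used one, refusing when every proxy has reached a per-window cap. The cap and window are assumptions you would tune per target site, and the class name is our own.

```python
import time
from collections import deque


class BudgetedPool:
    """Hand out proxies while keeping each under a per-window request cap."""

    def __init__(self, proxies, max_requests, window_seconds,
                 clock=time.monotonic):
        if not proxies:
            raise ValueError("proxies must not be empty")
        self._history = {proxy: deque() for proxy in proxies}
        self._max = max_requests
        self._window = window_seconds
        self._clock = clock  # injectable so the behavior can be tested

    def acquire(self):
        """Return the least-used proxy, or None if all are at their cap."""
        now = self._clock()
        # Drop timestamps that have aged out of the window.
        for stamps in self._history.values():
            while stamps and now - stamps[0] >= self._window:
                stamps.popleft()
        proxy, stamps = min(self._history.items(),
                            key=lambda item: len(item[1]))
        if len(stamps) >= self._max:
            return None  # caller should wait before retrying
        stamps.append(now)
        return proxy


pool = BudgetedPool(["proxy-1", "proxy-2"], max_requests=30, window_seconds=60)
proxy = pool.acquire()
```

When `acquire` returns `None`, the scraper should pause rather than push more requests through an IP that is already at its limit.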

Some websites display different content or restrict access based on the visitor’s geographical location. By using a proxy server located in a specific region, you can bypass these geo-restrictions and scrape data regardless of its geographic availability.

Conclusion

If you are tired of getting blocked while you fetch data online, the best proxies for web scraping come to the rescue. They will ensure uninterrupted web information extraction, even on a large scale.